Part I - The progression of AI

A brief history and a look at the surge in investment

Author’s note: For the past year or so, I have been on a writing hiatus while I learn to be a father and balance life at a startup. I have been quietly writing and researching a lot, but I have not been publishing. Today, I make a jump back into it, and I hope to keep it going!

Throughout 2022 and 2023, the focus within the technology community shifted from crypto maximalism to the surge in artificial intelligence (AI). The two hype cycles are very different. Crypto continues to struggle with mass adoption and use cases (Note: I wrote a piece on crypto for the Archive; I am a fan, but so far, it has simply not shown the value relative to investment), but AI has been providing value in less apparent ways for years (e.g., Google Search).

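With the introduction of GPT-3, ChatGPT, GPT-4, Gemini 1.5, and Claude 3.0, the industry appears to have hit a true inflection point. ChatGPT reached an estimated 100 million users within roughly two months of launch, which at the time made it the fastest-growing consumer application on record.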

I believe the AI hype (market pricing excluded) is real, and the next few decades of job and value creation will occur here. For the past year, I have put some of my writing aside while I focus on building at Replit, reading on and building with AI, and learning to become a parent.

AI is new for everyone. That’s the wonderful part about it. The frontier is being built now, so diving into this world and learning can allow you to differentiate yourself. You may feel behind, but you are not. The world is just moving fast.

To help both myself and non-technical readers understand all of this, I am planning to get back to my writing. I am starting with this multi-part series on AI. Hope you enjoy!

The origination of AI

As machines progressed, murmurs of an existential threat to humans followed almost immediately. The first depictions of machines with human intelligence began appearing as early as 1927, starting with a German film called Metropolis. Science fiction continued to build on these concepts, including Isaac Asimov's I, Robot, a collection of short stories published in 1950, which ultimately led to the Hollywood film featuring Will Smith roughly a half-century later.

One of the first real steps came in 1950 from English computer scientist and mathematician Alan Turing, who proposed what is now known as the Turing Test (he called it the Imitation Game). The test requires a remote interrogator to interpret blind responses from two participants. One is a human. One is a machine. If the interrogator cannot reliably tell which is which, then the machine passes the Turing Test.

In 1955, computer scientists John McCarthy, Marvin Minsky, Nathaniel Rochester, & Claude Shannon officially coined the term "artificial intelligence" in a famous proposal called, “A proposal for the Dartmouth Summer Research Project on Artificial Intelligence” for an upcoming Dartmouth conference.

Just one year later, Allen Newell, Herbert Simon, and J. Clifford Shaw created The Logic Theory Machine. This is often referred to as the first artificial intelligence program. Together, these seven computer scientists are often considered the founding fathers of artificial intelligence as a field. Newell, Simon, and Shaw would also release a paper in 1959 on a new machine called the General Problem Solver (GPS).

At this same time, John McCarthy began focusing on AI, specifically an idea he had called the Advice Taker. He liked the list-based idea behind IPL (Information Processing Language, the language Newell, Simon, and Shaw had built for their work), but he was not a fan of IPL itself. Over the course of two years, McCarthy and some students built a new, function-based language called List Processor (LISP). LISP continues to be used today, and Paul Abrahams of Courant Institute says, “Lisp was the work of one mind… basically Lisp was John McCarthy’s invention” (Source: The Dream Machine).

While not specific to AI, in 1960 J.C.R. Licklider released a paper called "Man-Computer Symbiosis". Until that point, most people thought of computers as machines predetermined to do a task. This paper was revolutionary in changing the paradigm of how we think about two-way communication and interaction with computers. Two great quotes illustrate how Licklider thought of humans and computers:

“The tree and the insect are thus heavily interdependent: the tree cannot reproduce without the insect; the insect cannot eat without the tree; together, they constitute not only a viable but a productive and thriving partnership. This cooperative… is called symbiosis”

“Humans will set the goals and supply the motivations… They will ask questions… The equipment will answer the questions”

Throughout the remainder of the 20th century, artificial intelligence continued to advance, but by today's standards, it was at a relatively slow pace. Most of the advances were in computing infrastructure and networking, like time-sharing, packet switching, and the creation of the internet. All of this was required to build a larger foundation. In many ways, the ideas and promise of AI outpaced the infrastructure. A few milestones throughout this time:

The First Chatbots (1960s): ELIZA was created by MIT computer scientist Joseph Weizenbaum. It was the first real chatbot that enabled human-machine conversation.

The AI winter (1970s & 1980s): For a couple of decades, funding and enthusiasm for artificial intelligence cooled. Computer scientists kept working on it, but progress was mostly small and incremental (relative to some of the milestones and step-changes we have seen).

Deep Blue Chess Machine (1997): Developed over ~12 years, starting as a project at Carnegie Mellon University and continuing at IBM, Deep Blue was an IBM supercomputer that played chess. After facing Garry Kasparov and losing in 1996, the computer system beat Kasparov in 1997. For the first time, a computer had beaten a reigning human world champion in a game as complex as chess.

Nvidia invented the Graphics Processing Unit (GPU - 1999): None of these innovations in AI would have been possible without the rapid advancement in computers we have seen over the past half-century. GPUs are built to run many calculations in parallel (vs. a CPU, which runs them largely serially). They were primarily designed for the gaming industry. In the early 2000s, scientists realized they were actually quite good at running parallel calculations for simulations. Today, most training and inference for Large Language Models (LLMs) runs on GPUs, and Nvidia is still the dominant player. For a deep-dive on GPUs, check out the Acquired Podcast on Nvidia.

Meet Siri (2010): Siri first launched as an iPhone app in 2010. Apple acquired it that year and built it into the iPhone 4S in 2011. The idea of talking to a computer was still fairly novel, and within years, Siri would be in the pockets of hundreds of millions of users. IBM's Watson also debuted around this time, and Amazon's Alexa would be released a few years later (in 2014).

AlexNet (2012): ImageNet was presented as a visual object recognition database in 2009. It was a milestone itself, but the greater step occurred three years later when Alex Krizhevsky, Ilya Sutskever (now Chief Scientist of OpenAI), and Geoffrey Hinton created AlexNet. AlexNet competed in the ImageNet Large Scale Visual Recognition Challenge, and it achieved a top-5 error rate of 15.3%, more than 10 percentage points better than the runner-up. They achieved this by training on GPUs.

From 1997 to 2012, AI seemed to begin accelerating, but in 2017, the entire space changed forever. Google Brain researchers released a paper called "Attention Is All You Need."

We propose a new simple network architecture, the Transformer, based solely on attention mechanisms, dispensing with recurrence and convolutions entirely. Experiments on two machine translation tasks show these models to be superior in quality while being more parallelizable and requiring significantly less time to train.

The Transformer was born. Transformers use a multi-layer process. In a later part, we will talk about the actual steps for a Transformer-based Large Language Model (LLM), but for now, the key insight to understand is attention. Transformers provided an architecture that allows models to better understand words based on the surrounding words.

Consider the sentence “I have no interest in politics.” Models process each word (or “token”) individually. Each word is critical. Imagine if the model could not process a word from the sentence. The model could read, “I have interest in politics” or “I have no interest.” Both of these are different from the intended meaning.

Transformer attention was a breakthrough that allows models to understand the meaning and relationships of surrounding words. It is very powerful.
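For readers who want to see the idea in code, here is a minimal sketch of the attention calculation on the sentence “I have no interest in politics.” It is a toy, not how a production model is built: the two-number vectors for each word are made up for illustration, and the sketch skips the learned query, key, and value projections that a real Transformer uses. What it does show is the core move: every word scores every other word, the scores become weights that sum to 1, and each word's new representation is a weighted blend of its neighbors.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    """Dot product: a rough measure of how similar two vectors are."""
    return sum(x * y for x, y in zip(a, b))


def self_attention(vectors):
    """Scaled dot-product self-attention.

    Simplification (an assumption of this sketch): queries, keys, and
    values are all the input vectors themselves, with no learned weights.
    """
    scale = math.sqrt(len(vectors[0]))
    all_weights, outputs = [], []
    for query in vectors:
        # Score this word against every word in the sentence.
        scores = [dot(query, key) / scale for key in vectors]
        weights = softmax(scores)
        # Blend all word vectors using the attention weights.
        mixed = [
            sum(w * v[i] for w, v in zip(weights, vectors))
            for i in range(len(query))
        ]
        all_weights.append(weights)
        outputs.append(mixed)
    return all_weights, outputs


tokens = ["I", "have", "no", "interest", "in", "politics"]
# Hypothetical embeddings, invented for this example only.
embeddings = [
    [1.0, 0.0],
    [0.5, 0.5],
    [0.0, 1.0],
    [0.2, 0.9],
    [0.5, 0.4],
    [0.9, 0.3],
]

weights, outputs = self_attention(embeddings)

# How much does "interest" look at each word in the sentence?
for token, weight in zip(tokens, weights[tokens.index("interest")]):
    print(f"{token:>9}: {weight:.2f}")
```

With these made-up vectors, “interest” puts its largest weight on “no,” which is the relationship the model needs to capture to read the sentence correctly. That result comes from how I chose the numbers; in a real model, the vectors are learned during training.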

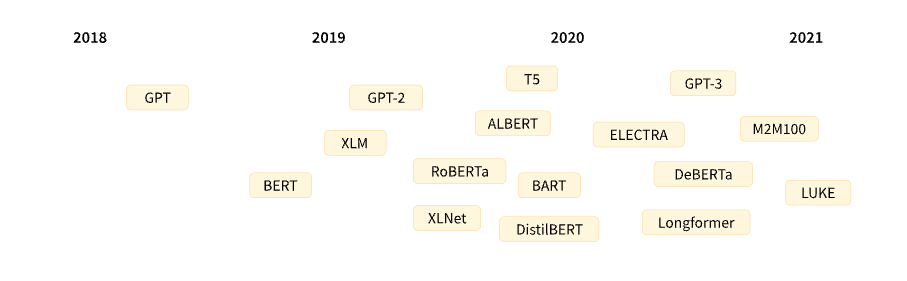

Transformers have also been far more effective in performance and cost. As a result, they are the dominant architecture, and the pace of development has accelerated. Hugging Face has a year-by-year view of Transformer models (above). The full page can be found here.

The data ends in 2021, and since then, the pace has accelerated much more. Today, there are dozens of model providers with commercially accessible LLMs. Here's an example list.

The investment in AI

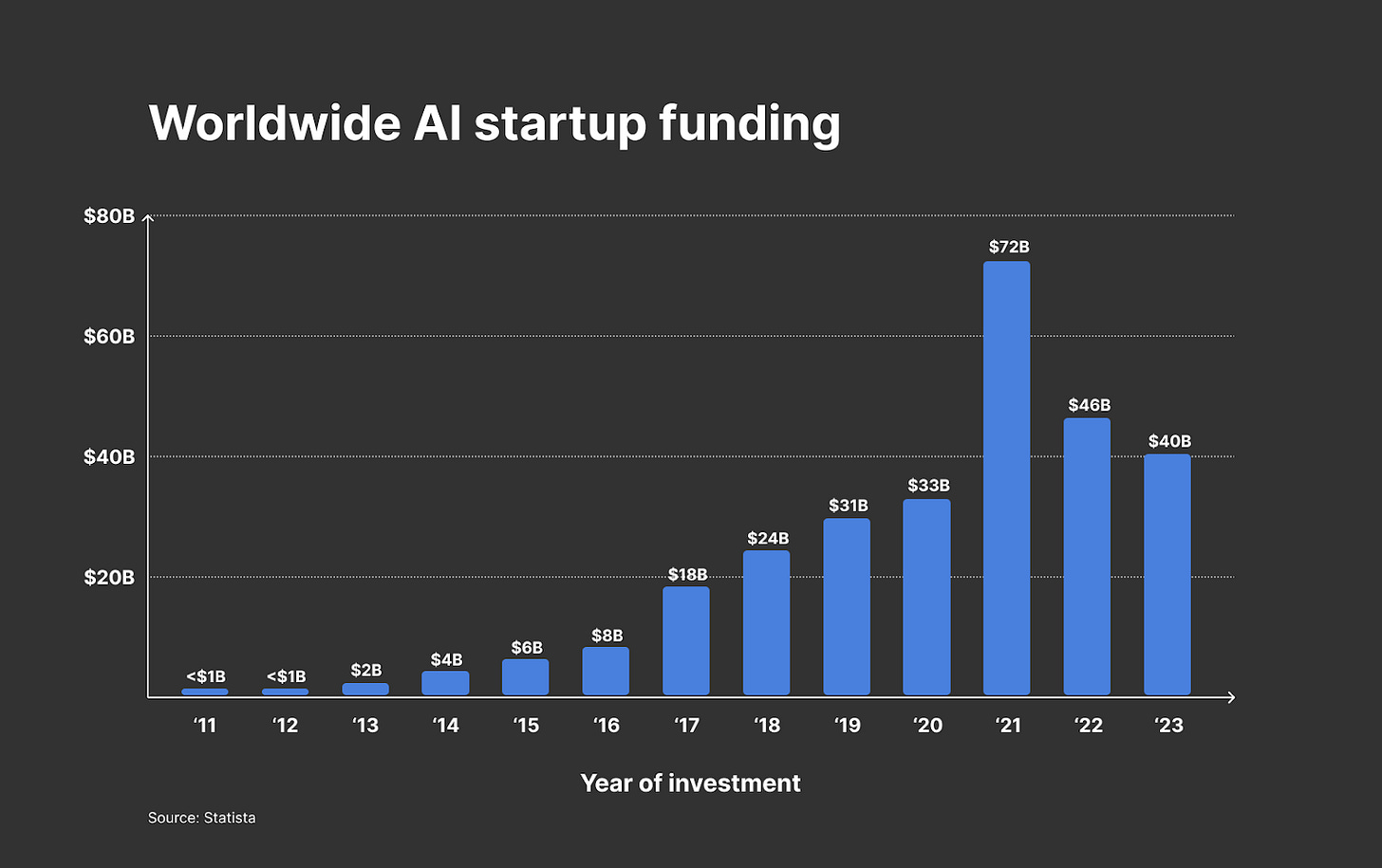

The increase in development in AI has been paired with an increase in overall venture capital investment.

In 2017, venture capital investment jumped more than 2x to $18B worldwide. Since then, the overall investment has continued to increase, hitting its highest point at $72B in 2021. Over the past two years, there has been a decline (post-ZIRP era), but it has still settled at more than $40B per year. That’s 20x more than a decade ago.

Unsurprisingly, this investment has caught the eyes of both early-stage builders and enterprises. Y Combinator is the #1 startup accelerator in the world, and in their latest cohort (aka batch), 172 of the 242 companies (71%) are building in AI, including many that are training their own models.

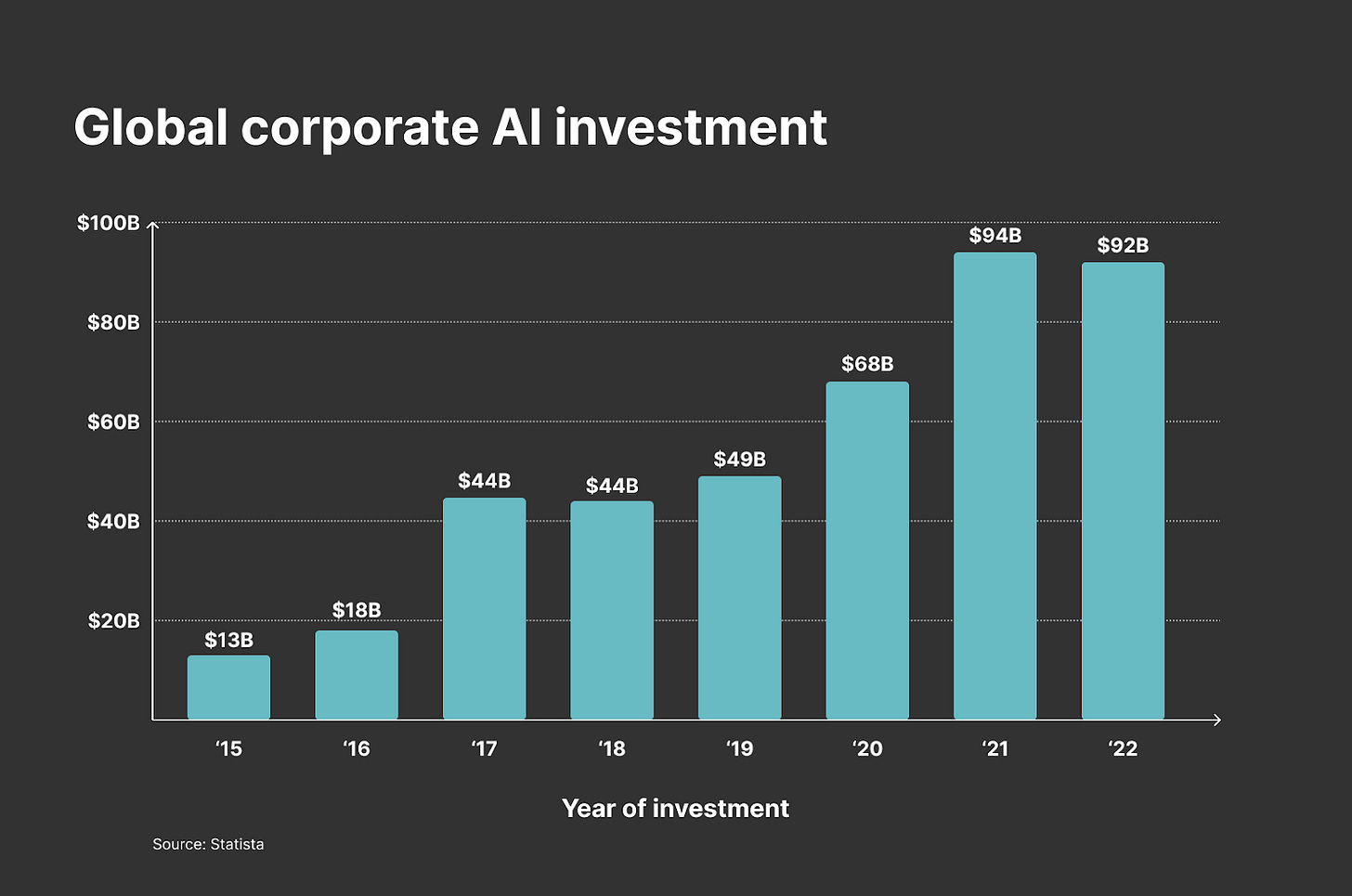

For large companies, AI is one of their primary focuses right now. 17% of Russell 3000 companies mentioned AI in their earnings calls. Companies have been investing in AI for almost a decade, but recently, they are estimated to be investing almost $100B per year in AI.

The overall deals are staggering. Here are some key ones to pay attention to:

As crazy as it is to say, it appears this is only getting started. With more model releases pending, there is even more value to be captured.

In the next piece, we will dive a bit deeper into the basics before diving into how an LLM actually works in Part III and Part IV.
