In Part I - The progression of AI, we discussed a brief history of AI and the recent investment. In Part II - Understanding the Basics, we covered some basic definitions like data science and machine learning, gave an overview of data structures, and then looked at investment in data infrastructure and Generative AI.

In Part III, we will cover a few more example use cases. Then we will discuss the concept at the root of it all: the neural network.

Example AI use cases and applications

First, it is important to distinguish between AI and Large Language Models (LLMs). LLMs are a type of AI model that is good at natural language processing (NLP). ChatGPT uses an LLM. Because LLMs are more consumer-facing, people mostly think of AI as LLMs, but there are many other applications like vision models, recommendation engines, etc.

With that in mind, let’s go through just a few, non-exhaustive examples of AI:

Translation

Extraction

Generation

Prediction

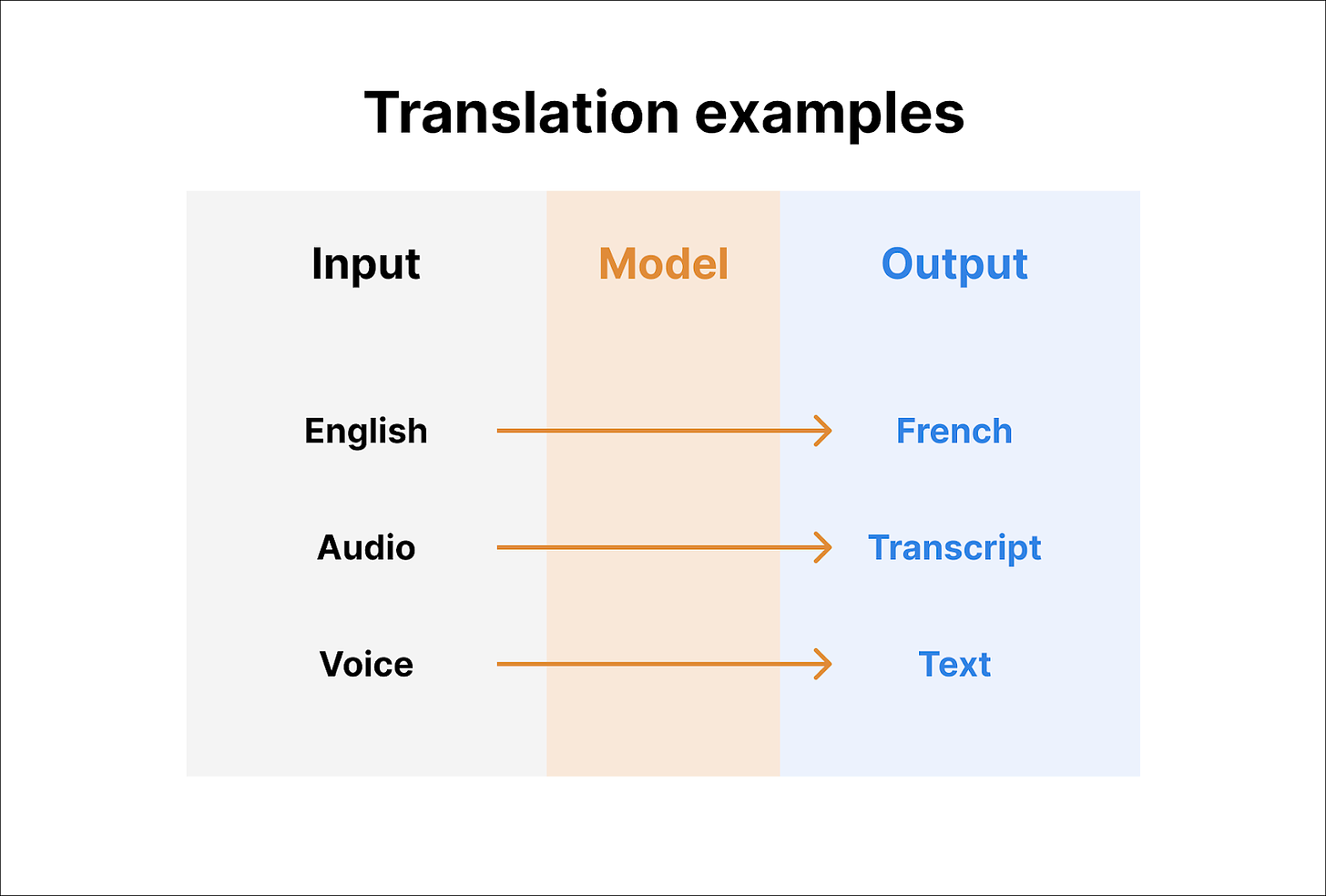

A. Translation

Translation is the act of the AI model converting from one format to another. The obvious example is language translation. Google announced live language translation in Google Meet:

This could be extended to other places: translating webpages, movies, etc. Language translation is typically expensive, and language is a global barrier for some people. Now, LLMs can do it almost instantly.

Some other examples are translating formats. For example, Siri converts your voice to text.

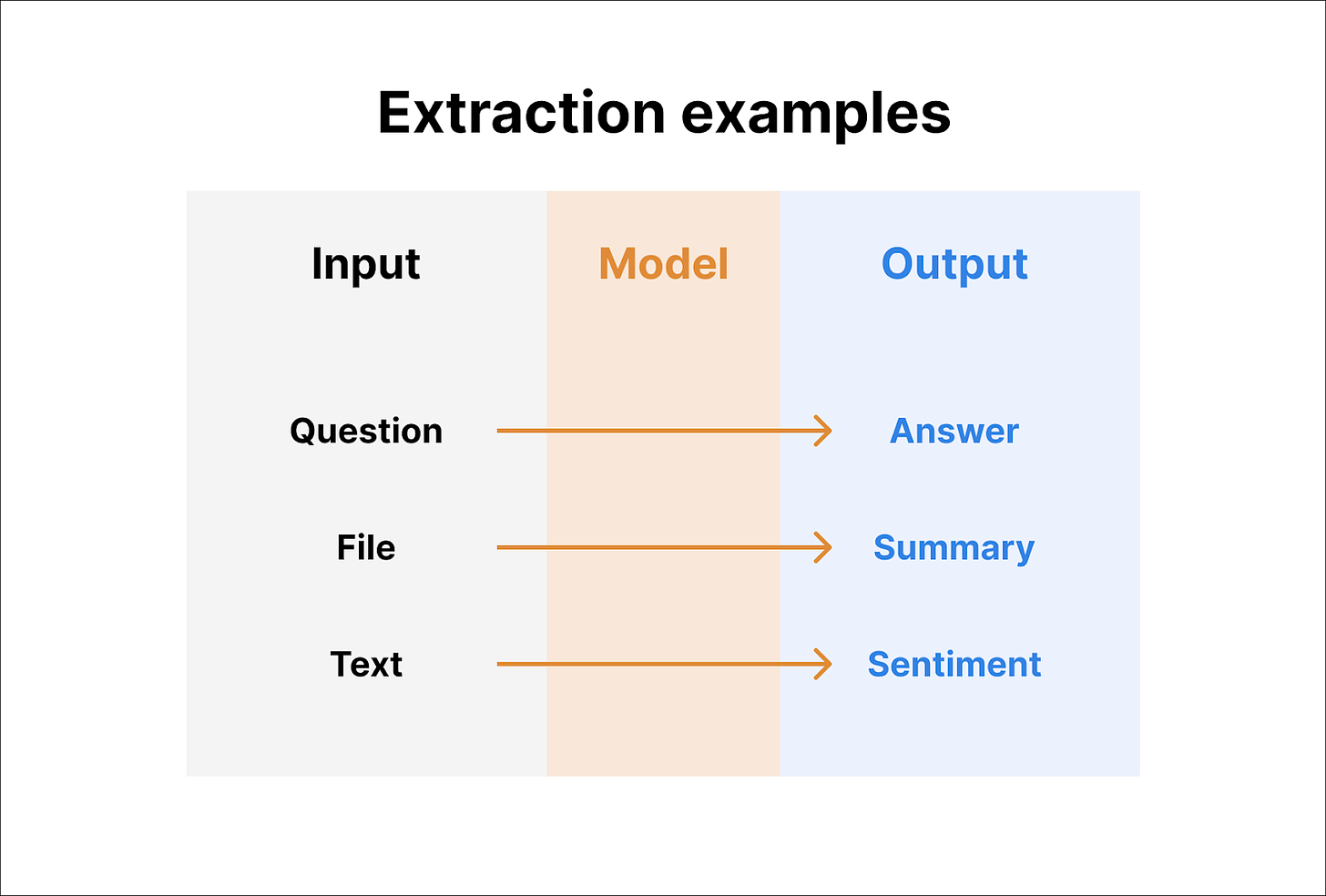

B. Extraction

Extraction involves taking information from the model. Models like ChatGPT have essentially consumed massive amounts of data and compressed it.

An obvious application is question and answer. Here’s an example of me asking ChatGPT about George Washington:

Exhibit 3: Example ChatGPT prompt

This was not intentional. I was today years old when I learned George Washington did not have a middle name.

The demo in Exhibit 3 shows that you can ask a pointed question (e.g., his middle name) or an open-ended question (e.g., tell me what you know), and you can extract that information from what the model knows. Note: Models can “hallucinate” and provide false information.

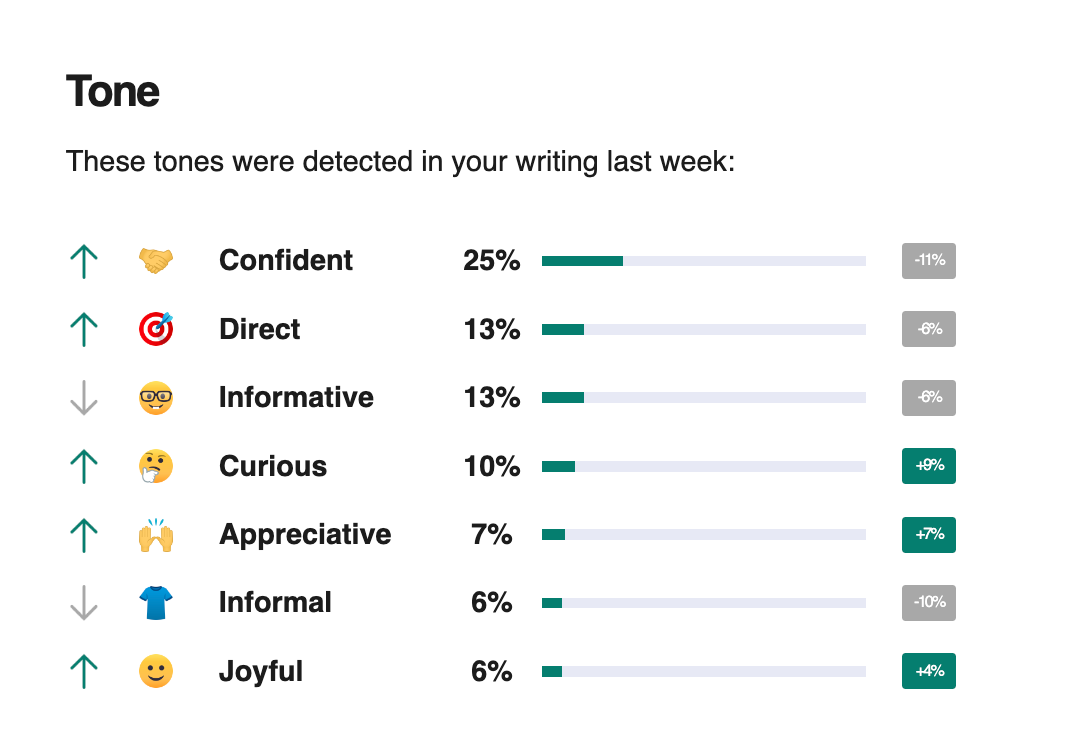

Other examples of extraction could be file summarization or sentiment analysis. For the former, you could upload 200-page PDFs and ask models for a summary. Another example could be an AI model processing text and extracting insights like sentiment. For example, Grammarly sends me this report weekly:

Apparently, my writing is confident, but my confidence is declining? Sad!

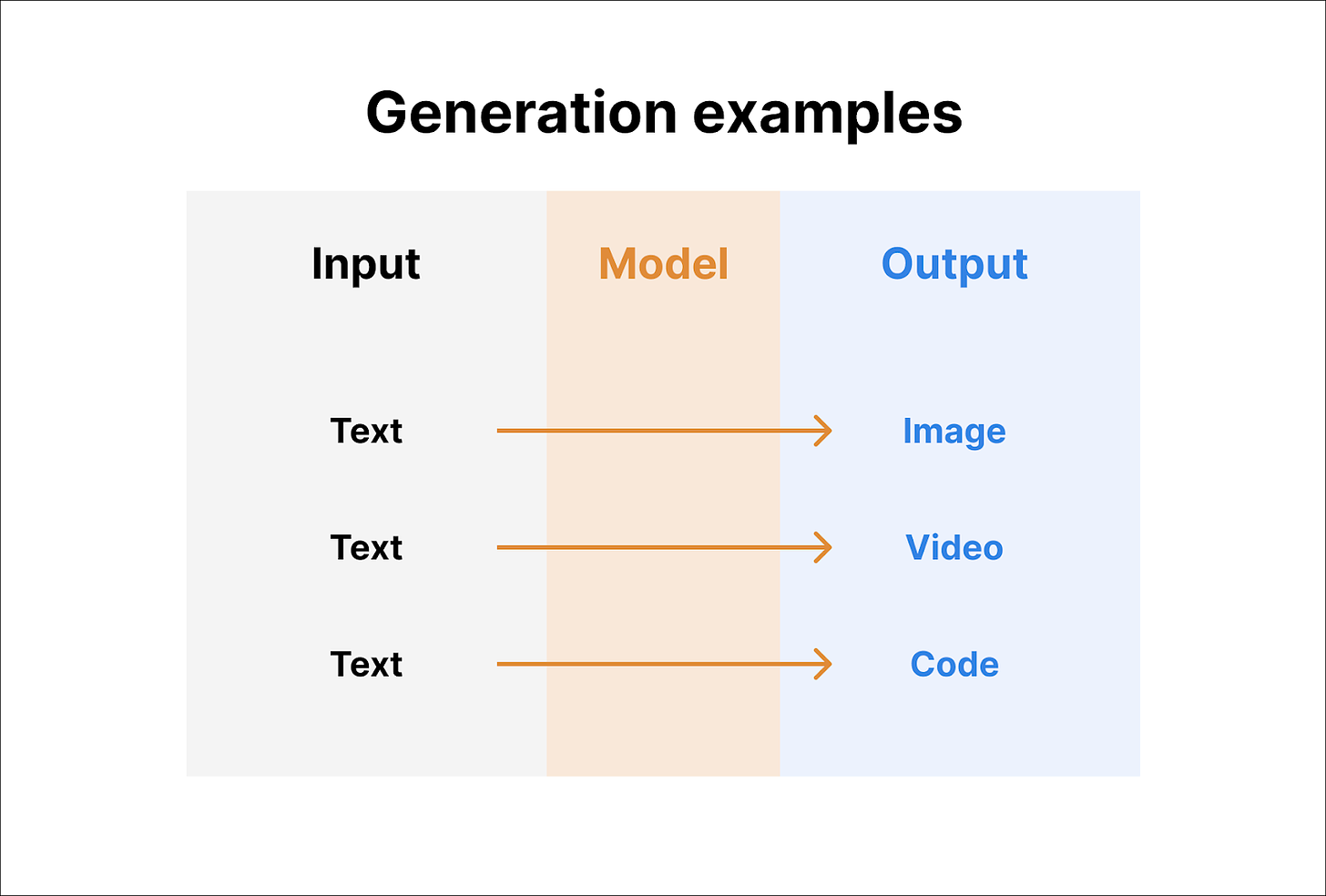

C. Generation

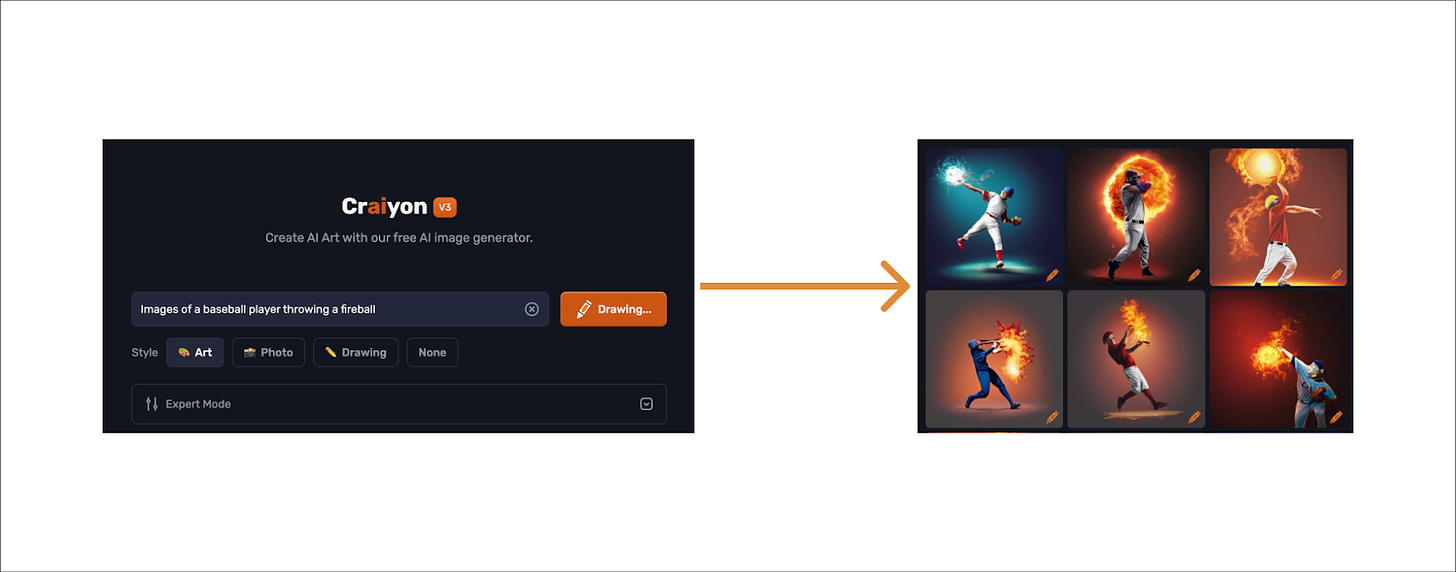

Generation involves taking one input and creating something new from it. A basic example is something like DALL-E or Craiyon, where you give a prompt, and it creates an image for you:

You can try Craiyon here. It takes about a minute, and it’s lower quality than other options on the market, but it is free!

But there are a lot of other examples. This is a particularly large and mind-bending space, so I encourage you to check some out:

Marketing copy: Jasper is a company that helps marketers generate marketing copy

Code: In Part II, I shared an example of Replit AI generating code from text

Websites: There are apps like DesignerGPT that generate entire websites from a prompt

Video: Sora and Pika can make unique video clips based on a prompt

Music: Udio just dropped and can generate music from a text prompt

There are many more.

D. Prediction

Prediction involves a model taking in numerous data points (predictor variables) to then predict the outcome of something else (target variable). In essence, all of the other applications work like this. For example, when a model is translating language, it ingests the text of the prompt, and then it predicts each expected new word (more on that later).

For this section, it’s hard to show examples, but think about non-obvious use cases like:

Predicting a purchase: Facebook and Google algorithms will use your demographic information, web history, etc. to predict whether you will buy a certain product… then serve those ads to you.

Demand forecasting: A model could use a variety of economic data, store data, etc. to forecast demand for specific items for a retailer.

Recommendation algorithms: Netflix ingests all of your view history, and then they provide recommendations that they predict you will watch.
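To make the predictor/target idea concrete, here is a minimal sketch of a demand forecast with a single predictor variable. The numbers are invented for illustration, and the straight-line fit is only a stand-in: real systems use many more variables and far more sophisticated models.

```python
# Toy demand forecast: one predictor variable (temperature) and one
# target variable (units sold). All numbers are made up for illustration.

def fit_line(xs, ys):
    """Fit y = slope * x + intercept with ordinary least squares."""
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(x, slope, intercept):
    """Use the fitted line to predict the target for a new predictor value."""
    return slope * x + intercept


temperatures = [10, 20, 30]   # predictor variable (hypothetical data)
units_sold = [30, 50, 70]     # target variable (hypothetical data)

slope, intercept = fit_line(temperatures, units_sold)
forecast = predict(25, slope, intercept)
print(forecast)  # predicted units sold on a 25-degree day
```

The model "learns" the relationship from past data (the fit) and then applies it to a value it has not seen (the forecast). That is the same shape as the ad and recommendation examples above, just with far fewer inputs.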

There are thousands of examples, and these are probably the most obvious and valuable ways businesses will derive value. A good example is JP Morgan. Jamie Dimon says they have used predictive AI/ML for years, and they have 400+ use cases in production, 2,000+ AI/ML experts, and a $15B IT budget.

For a good list of recent AI examples, check out this post. Here are some of my favorite others:

Figure Robotics - Building humanoid robots

Tesla Full Self-Driving - Using imitation learning models to achieve autonomous driving. Currently 1M+ users.

Roboflow and AMP Robotics - Applying vision models to the industrial sector

Evolv Technology - Advanced security for public spaces

The neural network explained

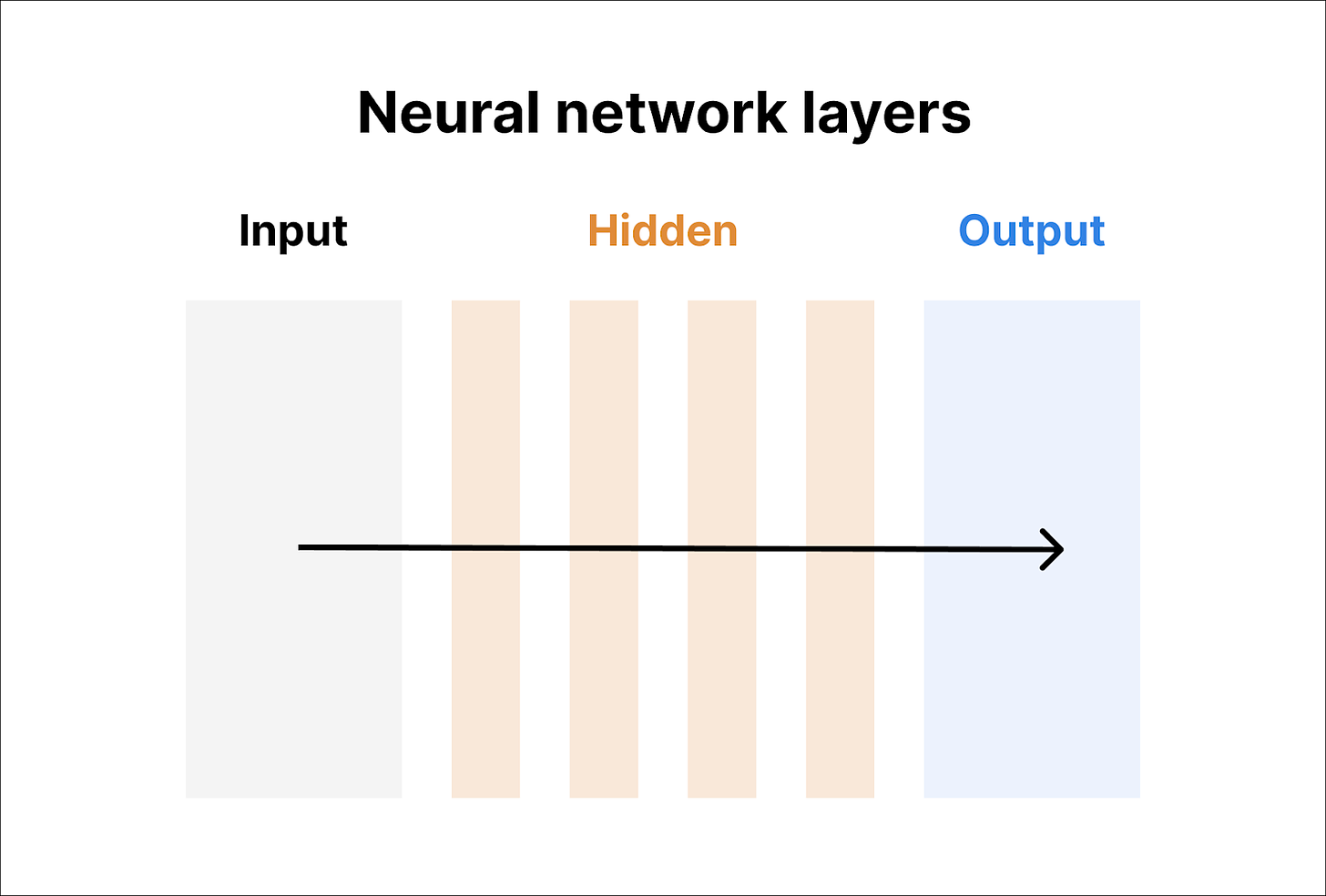

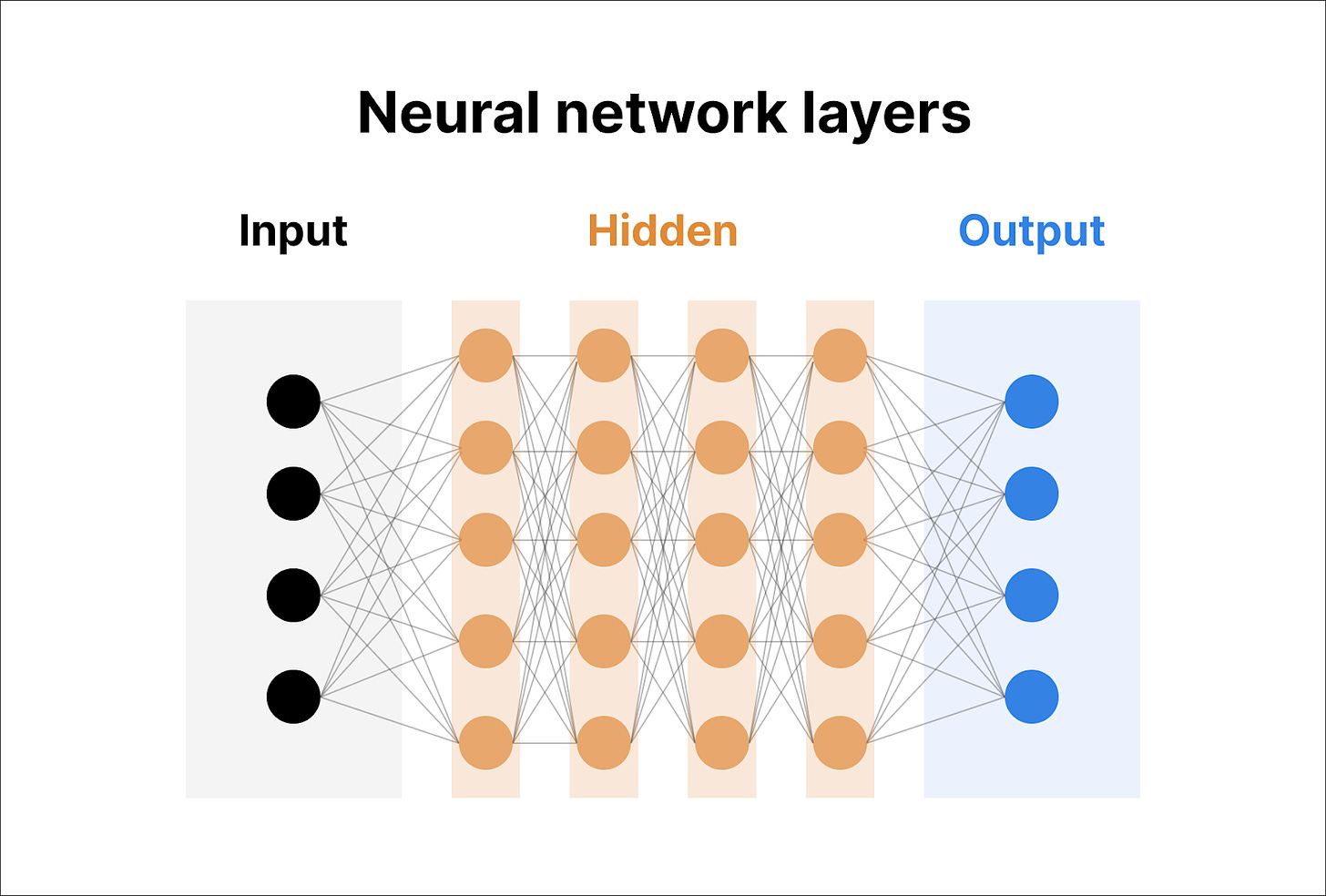

The question is… how do models do this? They use a concept called a neural network. A neural network uses multiple layers of neurons to manage complex calculations. Let’s break down how a neural network works. First, a neural network is made up of layers:

Similar to Exhibits 2, 4, and 7, there is an input layer and an output layer. In between those layers, there are hidden layers. Models can vary in how many hidden layers they have. In future parts, we will discuss some examples of layers, but for now, just understand that each layer represents another layer of calculations that help the model complete its task.

Each layer consists of neurons. They can just as easily be called nodes or processing units. In Exhibit 9, you can see 28 nodes (4 input, 20 hidden, 4 output). Essentially, each line on the graph is a calculation. If you count the lines, you should see 115 total calculations. Math is performed in between each node to determine the output. For now, we can ignore the specifics of the math. We will discuss that later.
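If you would rather not count lines by hand, the arithmetic can be sketched in a few lines of Python. This assumes every neuron connects to every neuron in the next layer, and that the 20 hidden neurons are arranged as four layers of five. That layout is my assumption, chosen because it reproduces the 28 nodes and 115 lines quoted above.

```python
def count_connections(layer_sizes):
    """Count the lines between adjacent layers in a fully connected network."""
    return sum(a * b for a, b in zip(layer_sizes, layer_sizes[1:]))


# Assumed layout: 4 inputs, four hidden layers of 5 neurons each, 4 outputs.
layer_sizes = [4, 5, 5, 5, 5, 4]

total_neurons = sum(layer_sizes)
total_connections = count_connections(layer_sizes)
print(total_neurons, total_connections)  # prints: 28 115
```

Each pair of neighboring layers contributes (neurons in one layer) x (neurons in the next) lines: 4x5 + 5x5 + 5x5 + 5x5 + 5x4 = 115.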

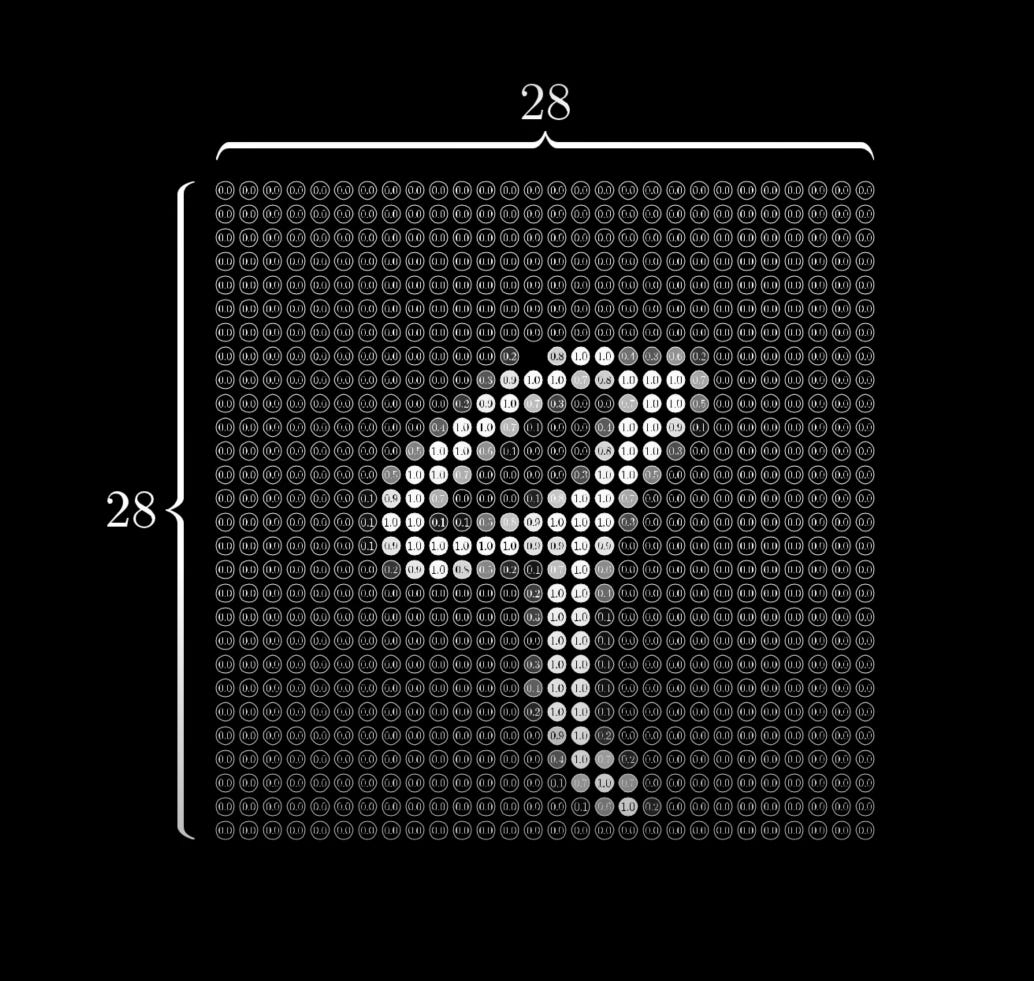

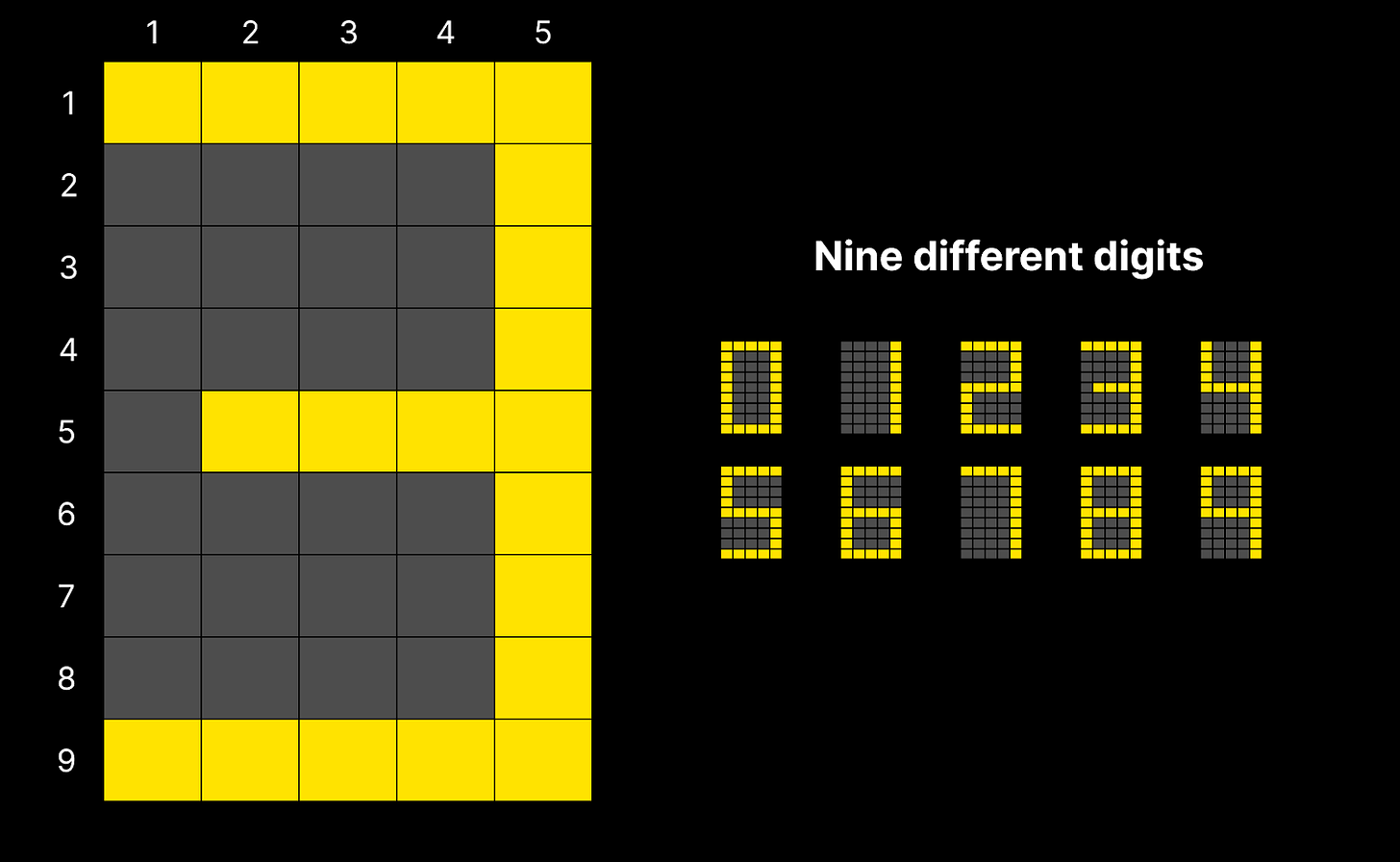

For now, let’s give a visual example that can help you understand the rough concepts of the layers. This example is inspired by 3Blue1Brown’s video, “But what is a neural network?” Let’s say you wanted a computer to see a picture of an old scoreboard and tell you a number on it.

If we look at one digit, there are five columns and nine rows. There are 45 total lightbulbs.

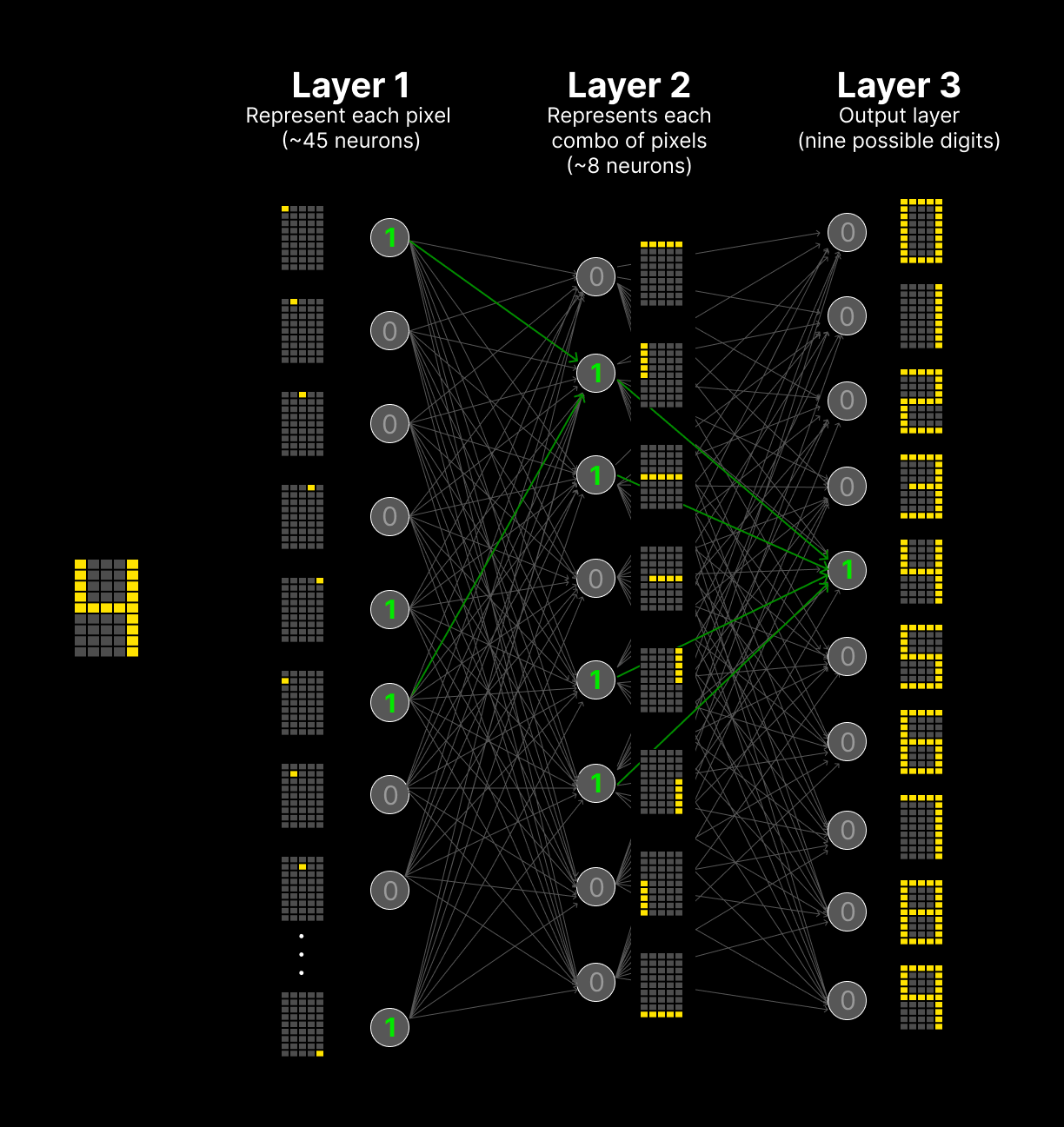

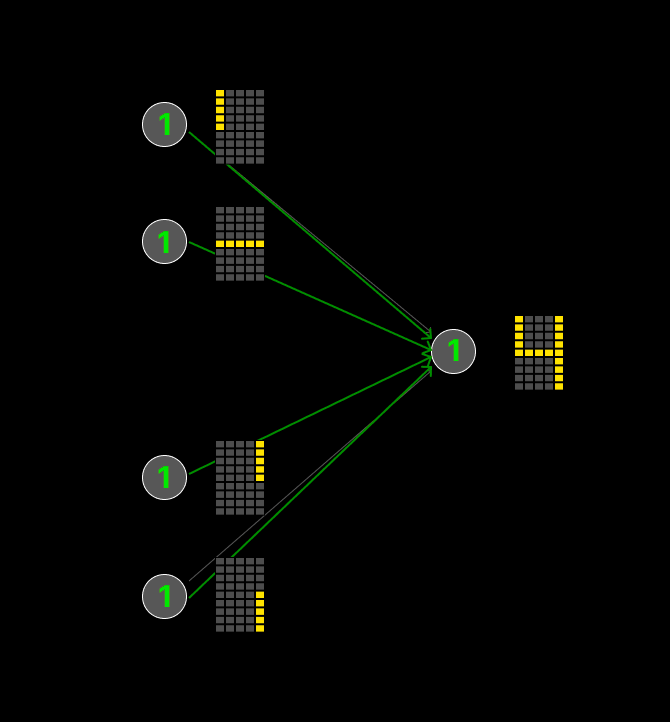

Each digit is a combination of these 45 lightbulbs (as shown on the right-hand side). With a human brain, you look at it and know the number right away. A computer would use a neural network. If we use the concepts above, we could imagine three very simple layers:

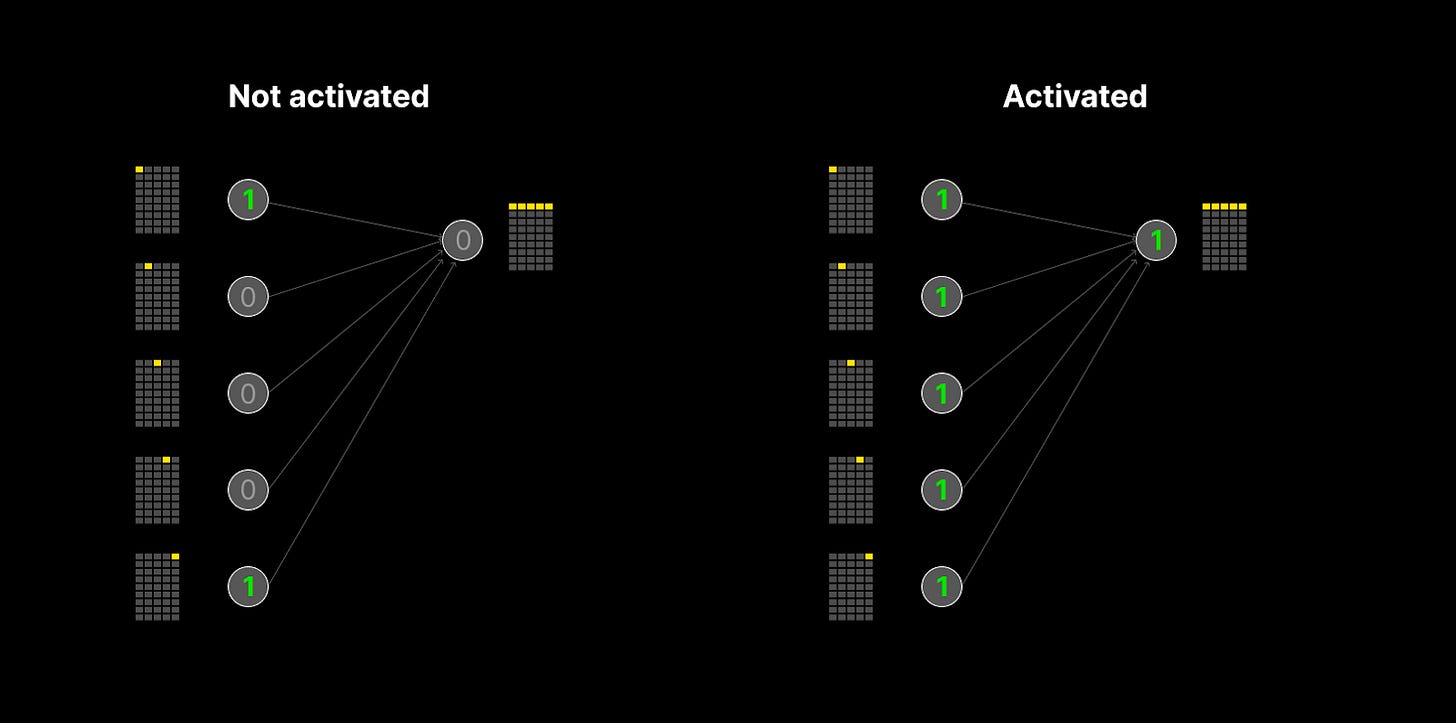

In the first layer, the neural network has 45 input neurons. Each one represents one of the lightbulbs. If that lightbulb is on, the neuron will activate (it will equal 1), and if it is off, the neuron will not activate (it will equal 0).

The second layer may be made of the different lines used to assemble a number.

So if every lightbulb that makes up a line is lit up in the input layer, then the neuron for that line will activate (example on the right). If any of those lightbulbs are not lit up, then it will not activate. For the four in Example 11, the neuron for the top row of lightbulbs did not activate.

Finally, Layer 3 is the output. Because Layer 3 sees that these four neurons are activated, it knows that it has to be a 4. Thus, the computer correctly identifies the number!
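Here is a rough sketch of those three layers in Python. The layout is my assumption: seven lines arranged like a classic seven-segment display on the 5x9 grid, with names such as `top_left` that I made up for illustration. A real neural network learns its rules during training instead of having them written by hand like this.

```python
ROWS, COLS = 9, 5  # 45 lightbulbs per digit


def row(r):
    """All bulbs in one horizontal line."""
    return {(r, c) for c in range(COLS)}


def col(c, first_row, last_row):
    """All bulbs in one vertical line, from first_row to last_row inclusive."""
    return {(r, c) for r in range(first_row, last_row + 1)}


# Layer 2 building blocks: the lines used to assemble a number (assumed layout).
SEGMENTS = {
    "top": row(0), "middle": row(4), "bottom": row(8),
    "top_left": col(0, 0, 4), "top_right": col(4, 0, 4),
    "bottom_left": col(0, 4, 8), "bottom_right": col(4, 4, 8),
}

# Which lines make up each digit (standard seven-segment patterns).
DIGITS = {
    0: {"top", "top_left", "top_right", "bottom_left", "bottom_right", "bottom"},
    1: {"top_right", "bottom_right"},
    2: {"top", "top_right", "middle", "bottom_left", "bottom"},
    3: {"top", "top_right", "middle", "bottom_right", "bottom"},
    4: {"top_left", "top_right", "middle", "bottom_right"},
    5: {"top", "top_left", "middle", "bottom_right", "bottom"},
    6: {"top", "top_left", "middle", "bottom_left", "bottom_right", "bottom"},
    7: {"top", "top_right", "bottom_right"},
    8: set(SEGMENTS),
    9: {"top", "top_left", "top_right", "middle", "bottom_right", "bottom"},
}


def draw(digit):
    """Return the set of lit bulbs for a digit on the scoreboard."""
    return set().union(*(SEGMENTS[name] for name in DIGITS[digit]))


def input_layer(lit_bulbs):
    """Layer 1: one neuron per bulb, 1 if lit and 0 if not."""
    return [1 if (r, c) in lit_bulbs else 0
            for r in range(ROWS) for c in range(COLS)]


def hidden_layer(inputs):
    """Layer 2: a line's neuron activates only if every bulb in it is lit."""
    return {name for name, bulbs in SEGMENTS.items()
            if all(inputs[r * COLS + c] == 1 for r, c in bulbs)}


def output_layer(active_lines):
    """Layer 3: pick the digit whose lines match the active neurons."""
    for digit, lines in DIGITS.items():
        if lines == active_lines:
            return digit
    return None


inputs = input_layer(draw(4))
active = hidden_layer(inputs)
print(sorted(active))
print(output_layer(active))
```

For the 4, the two corner bulbs of the top row are lit (they belong to the vertical lines), but the three bulbs between them are not, so the `top` neuron stays off. That is the "any bulb unlit means no activation" rule in action.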

I gave a simple example. In 3Blue1Brown’s example, they use:

784 pixels (roughly 17x what I did)

Different shades and shapes (ranging 0.00-1.00 instead of 0 and 1)
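As a small sketch of what that input looks like: 784 pixels is a 28x28 image, and each pixel becomes one input neuron with a value between 0.00 and 1.00. The 0-255 raw brightness scale below is my assumption about how the image is stored; it is a common convention for grayscale images.

```python
WIDTH, HEIGHT = 28, 28  # 28 x 28 = 784 pixels


def normalize(raw_pixels):
    """Scale raw brightness values (assumed 0-255) into the 0.00-1.00 range."""
    return [round(p / 255, 2) for p in raw_pixels]


# A hypothetical, mostly black image with two brighter pixels.
raw_pixels = [0] * (WIDTH * HEIGHT)
raw_pixels[0] = 255   # fully lit
raw_pixels[1] = 128   # about half lit

inputs = normalize(raw_pixels)
print(len(inputs), inputs[:3])
```

Instead of a lightbulb that is strictly on or off, each input neuron now carries a shade of gray.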

Even then, things could get much more complex. Imagine vision models or the complex examples above. They deal with millions or billions of variations.

There is much more to cover here. In Part IV, we will discuss training and some of the math behind the neurons. For now, I have included some brief responses to FAQs that I have had as well. Feel free to ask more.

Some frequently asked questions (FAQs)

How do the neurons know whether to activate or not?

It’s all math. We will discuss it more at length in the next newsletter, but each neuron has what’s called an activation function. The activation function is an equation that takes the previous layer as inputs, runs the calculations, and delivers an output. The numbers that feed into that equation (the weights on each input) are determined in training, which we will discuss in future parts.
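As a preview, here is a minimal sketch of a single neuron. It assumes a sigmoid activation function, which is one common choice among several, and the weights and bias are hypothetical values I picked by hand. In a real model, training is what sets those numbers.

```python
import math


def sigmoid(z):
    """Squash any number into the range 0 to 1."""
    return 1 / (1 + math.exp(-z))


def neuron_output(inputs, weights, bias):
    """Weighted sum of the previous layer's outputs, passed through sigmoid."""
    weighted_sum = sum(x * w for x, w in zip(inputs, weights)) + bias
    return sigmoid(weighted_sum)


previous_layer = [1.0, 0.0, 1.0]   # outputs of three neurons in the prior layer
weights = [2.0, -1.0, 2.0]         # hypothetical; normally learned in training
bias = -3.0                        # hypothetical; normally learned in training

activation = neuron_output(previous_layer, weights, bias)
print(round(activation, 3))
```

The closer the output is to 1, the more strongly the neuron is "activated"; the closer to 0, the less.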

How do people decide on the number of layers?

From what I can tell… this is pretty arbitrary. We will discuss more later, but in training, there is a ton of math to try to make these complex systems accurate. And trying different architectures, layers, etc. is part of the process. The middle layers are very much a black box.